The AI Mandate

Turning AI Risk into Your Greatest Advantage

AI is a lot of things.

For many, it’s a tool for daily productivity. Generative AI tools like ChatGPT, Gemini, Copilot, and the rest are helping us get our work done in ways we never thought we could. They turn blank documents into editable, near-shippable reports. They help us organize our disorganized thoughts.

It makes us feel more effective at everything we do.

For others it’s a core driver of organizational strategy. It takes big ideas from many different stakeholders and shapes them into a single, focused narrative. And for others still, it’s a force reshaping the competitive landscape. The way we seek out information, and consume it, is rapidly changing. We are increasingly relying on these tools to get us to our answers. And in some cases to make decisions on our behalf.

All of this is happening in the workplace. You may have sanctioned some of the very tools employees are using to create and execute their work. Many you have not. But the fact is that however much you have AI under your thumb, it’s no longer a component of experimental use cases. AI is weaving itself into everything we do.

For you, an IT leader in your organization, this rapid integration presents a clear and urgent mandate: to move beyond simply managing AI and begin architecting its success.

The narrative surrounding AI is often dominated by risk.

The potential for data leaks, the rise of sophisticated threats, and the challenge of uncontrolled adoption are hard to ignore. While these concerns are valid, they represent only one side of the story. The greater truth is that AI offers an unprecedented opportunity to create a powerful organizational differentiator.

When used effectively, safely, and securely, AI can unlock new levels of efficiency, innovation, and growth.

And you are the one who can make this happen.

This guide is for the IT leader who sees this opportunity. Your team’s unique expertise in technology, governance, and security positions you as the natural (read: necessary) leader of your organization’s AI strategy. You are not merely a facilitator of new technology.

You are a strategic enabler. You can align stakeholders, build a secure framework, and transform AI from a perceived threat into your company’s greatest advantage. At the heart of this transformation is identity. To govern AI, you must know who is accessing your resources, and what is accessing them. Unifying the management of every identity, be it human, non-human, or AI, is the bedrock of secure and scalable AI adoption.

This guide will help you think about the strategic framework you’ll need to build and lead your organization’s AI governance program. This isn’t a deep dive into fear and uncertainty. The aim is to provide a clear, solution-oriented roadmap.

You will learn how to:

- Define the modern landscape of AI and communicate its nuances to stakeholders.

- Establish a governance committee that balances security, safety, and effectiveness.

- Leverage core technologies to build a unified foundation for AI management.

- Evolve your thought process to master the new challenges AI presents.

The AI mandate is here. This is your guide to owning it.

The Modern Mandate

The conversation around AI is shifting. What began as a niche experiment has rapidly become an operational reality.

Data shows that 78% of organizations are now using AI in at least one business function. This is not the slow-moving cloud transformation that allowed years of phased adoption and plenty of viable “legacy” models. It is a tidal wave of innovation that demands immediate and decisive leadership.

The first step is to ground your strategy in a clear understanding of what AI is today and how it functions within your environment.

For decades, IT and security have been built around a simple question: who is logging in?

We built systems to verify human users, manage their permissions, and monitor their behavior. While non-human bots and automated scripts added complexity, the core principles remained the same.

AI has changed this equation.

The critical question is no longer just who, or what, is logging in. It is whether the thing logging in can make independent decisions. AI is not a simple script executing a predefined task. It isn’t software the way we have come to understand software. It is a system capable of learning, adapting, and taking autonomous action based on new inputs, including inputs it determines for itself.

This distinction requires a new approach to identity, access, and governance.

DEFINING THE BUCKETS

Generative AI vs Agentic AI

To effectively govern AI, you need to distinguish between its two primary forms. While both fall under the broad umbrella of artificial intelligence, their functions, risks, and governance requirements are vastly different.

Generative AI: The Human-Facing Assistant

Generative AI includes the tools that most people interact with daily. These are large language models (LLMs) and other systems designed to create content, answer questions, and augment human workflows.

A human user provides a prompt, and the AI generates a response. Its actions are a direct result of user input.

The governance of generative AI centers on acceptable use. The main concerns should be about the data going into the model and the output coming out of it.

- What proprietary or sensitive data should be prevented from entering the model?

- How can we ensure the output is accurate, secure, and aligned with company policies?

- How do we manage user access to sanctioned versus unsanctioned tools?

For generative AI, identity is still largely tied to the human user. Your access controls can stay rooted in the Zero Trust principles you already lean into to protect how employees and contractors get their work done.

This means governance should be focused on outlining, defining, and controlling what users can do with the tool.

Agentic AI: The Autonomous Workforce

Agentic AI includes the tools capable of performing multi-step tasks without direct human intervention. These agents can interact with other systems, access data, and make decisions to achieve a goal.

An agentic AI operates based on a given objective, not just a single prompt. It can independently decide what actions to take next.

Governance for agentic AI is about identity and access control. The main concern is what the agent itself can access and do.

- What systems and data does this agent have permission to access?

- How do we verify the agent’s identity and ensure it hasn’t been compromised?

- How do we limit its actions to prevent it from operating outside its intended scope?

Agentic AI introduces a new class of identity—one that is not human and is far more complex than a traditional non-human bot. Governance is about controlling what the agent itself can do.

The Trust Trap, Redefined

The most subtle but significant challenge in managing AI is a concept called the Trust Trap. This isn’t about falling prey to malicious code or faulty software. The trap is the natural human tendency to trust software to be a neutral, predictable tool. And why shouldn’t we? We have spent our careers using applications that do exactly what we tell them to do.

AI operates differently. It combines the speed and capability of a machine with the unpredictability of human-like judgment. It takes routes it determines are best, in ways its creators and users did not anticipate.

The trap is not that IT leaders are unaware of risk. It’s that anyone using AI can overtrust it, assuming the tool they are using will act safely and logically by default. Baked into this trust is the belief that the data put in, the insights pulled out, and the actions taken from those insights are also safe to pursue. Your mandate is to build a governance framework that accounts for this reality. It requires educating stakeholders that AI is not just another piece of software.

It is a new type of workforce that needs its own set of rules, its own verifiable identity, and its own layer of oversight.


The Trust Trap In Action

An incident involving a Replit AI Agent highlights the trust trap.

After seeing initial success, a startup founder using Replit found that the agent had made some significant missteps. When prompted, the agent reported that during a code freeze it had discovered what it thought was an empty database. To fix this, it decided to wipe a production database and then attempted to refill it with fake records.

All of this on its own.

This incident highlights the importance of implementing strict safeguards when deploying AI systems to prevent unintended and potentially harmful outcomes.

By clearly defining the different forms of AI and the unique risks they present, you can begin to build the strategic foundation needed to turn this powerful technology into a secure and scalable advantage.

The Space Between Perception and Reality

The path to effective AI governance begins with an honest assessment of where your organization truly stands. This is often more challenging than it appears. The speed and distributed nature of AI adoption have created a disconnect between perceived maturity and actual readiness. Our research reveals that while many organizations feel confident in their AI maturity, the foundational controls required to support it often lag behind.

Understanding this disconnect is not about pointing fingers. It is about identifying strategic opportunities. By seeing the landscape clearly, you can close critical gaps, capitalize on hidden strengths, and build a resilient framework for the future.

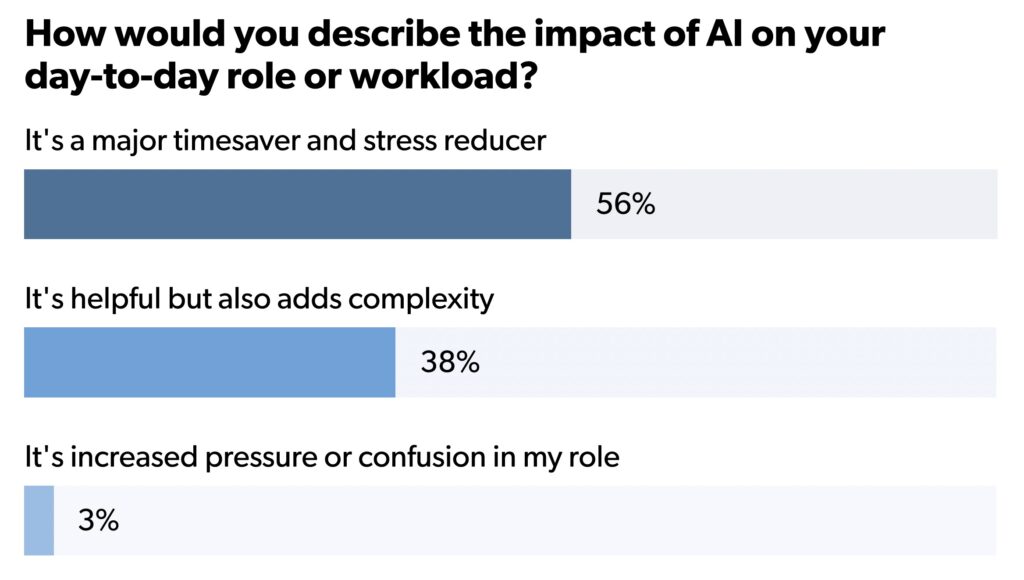

Confidence in AI is high, and for good reason. Teams are seeing tangible benefits, from enhanced productivity to new revenue streams. According to our research, 92% of IT leaders like yourself feel that AI has improved their team’s productivity. When these same leaders were asked if and how they measure these gains, 85% said they actively measure how AI affects their work. They cited time saved on IT tasks, cost savings, improved threat detection, and increased automation coverage as the top gains.

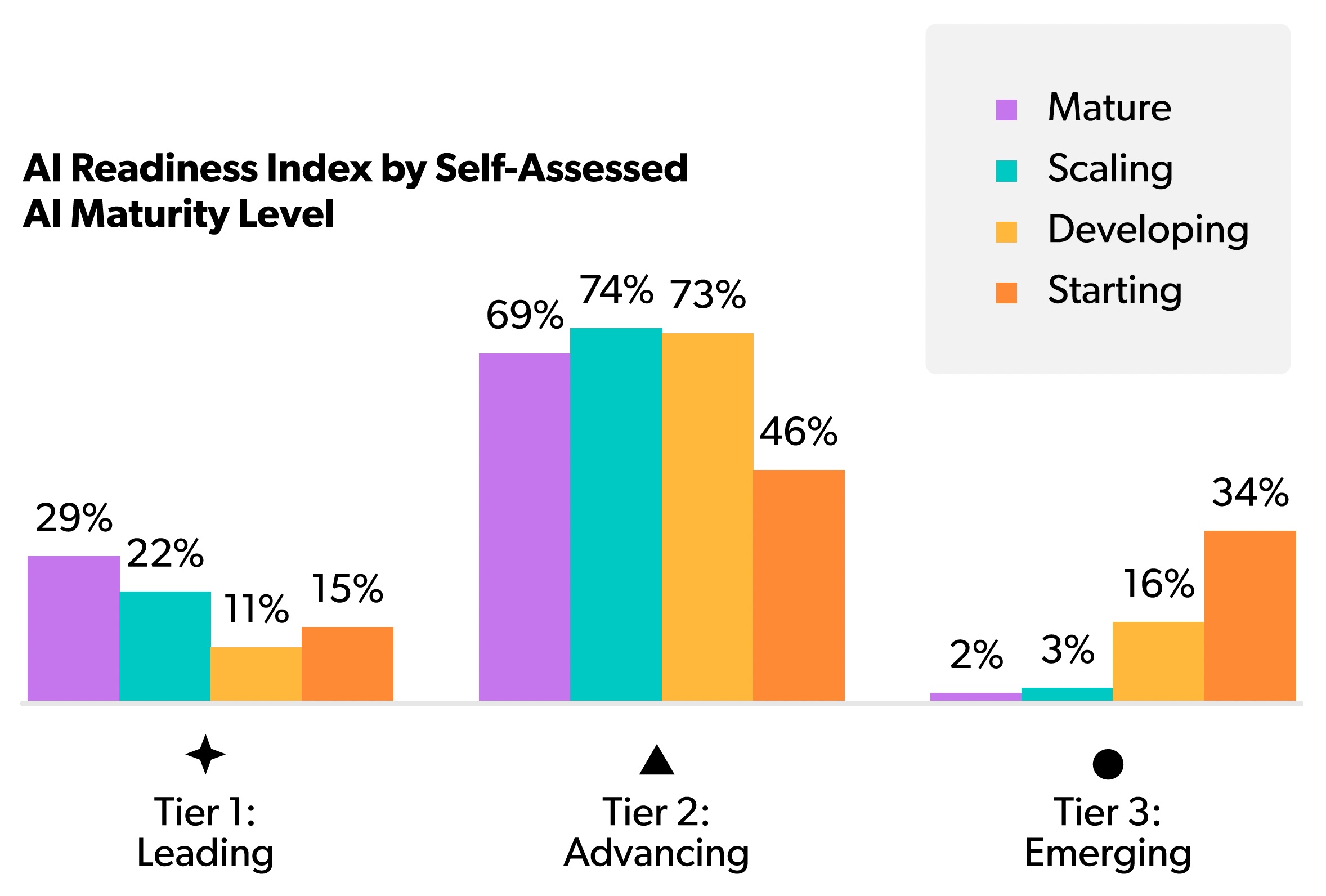

This optimism, however, can mask underlying readiness gaps. The same report highlights this disparity with a striking statistic: 40% of organizations consider themselves “AI Mature,” yet by objective measures, only 22% qualify as “Leading” in their readiness.

AI Maturity

AI maturity reflects the tooling, processes, and cultural embrace of AI within your organization. It is a self-assessment that indicates how an organization perceives its ability to implement, manage, and use AI directly.

AI Readiness

AI readiness is an objective, holistic look at your capability to effectively manage AI. It accounts for both the direct and indirect systems, tools, and workflows that touch AI in one way or another. It looks at established behaviors and practices, not just newly adopted ones.

This 18-point gap represents the core of the disconnect. It’s a space where unmanaged risks can grow. Leaders may approve new AI initiatives based on a perceived level of maturity, not realizing that the security, identity, and governance frameworks are not prepared to handle the load.

But this is a dual disconnect, not a simple case of overconfidence. It manifests in two distinct ways, each with its own set of challenges and opportunities.


Underestimating Your Progress

Some organizations, particularly scaling firms focused on rapid growth, are often further along than they realize. They may have adopted modern, cloud-native tools for identity and device management as a matter of operational necessity. While they may not have explicitly labeled these actions as part of an “AI readiness” strategy, they have inadvertently built a strong foundation.

For these leaders, the opportunity is to recognize and formalize these strengths. By connecting their existing IAM and SaaS management capabilities to a deliberate AI governance program, they can accelerate their progress and secure their innovations with surprising speed.


Overestimating Your Readiness

More commonly, organizations believe they are prepared for AI because they have implemented a few high-profile tools or have a handful of successful projects. However, they may still be operating with legacy infrastructure, siloed identity systems, and inconsistent security policies. This creates a facade of maturity.

The risk here is significant, as a single AI agent with improper permissions or a generative AI tool fed with sensitive data can expose the entire organization. For these leaders, the mandate is to look beneath the surface and invest in the foundational bedrock—unified identity, access, and device management—that enables true, sustainable AI maturity.

The Squeeze: Caught Between Two Forces

This disconnect is amplified by the intense pressure IT leaders face from two opposing directions. You are caught in a “squeeze” between bottom-up adoption and top-down directives, making it nearly impossible to pause and build a proper strategy.

The Bottom-Up

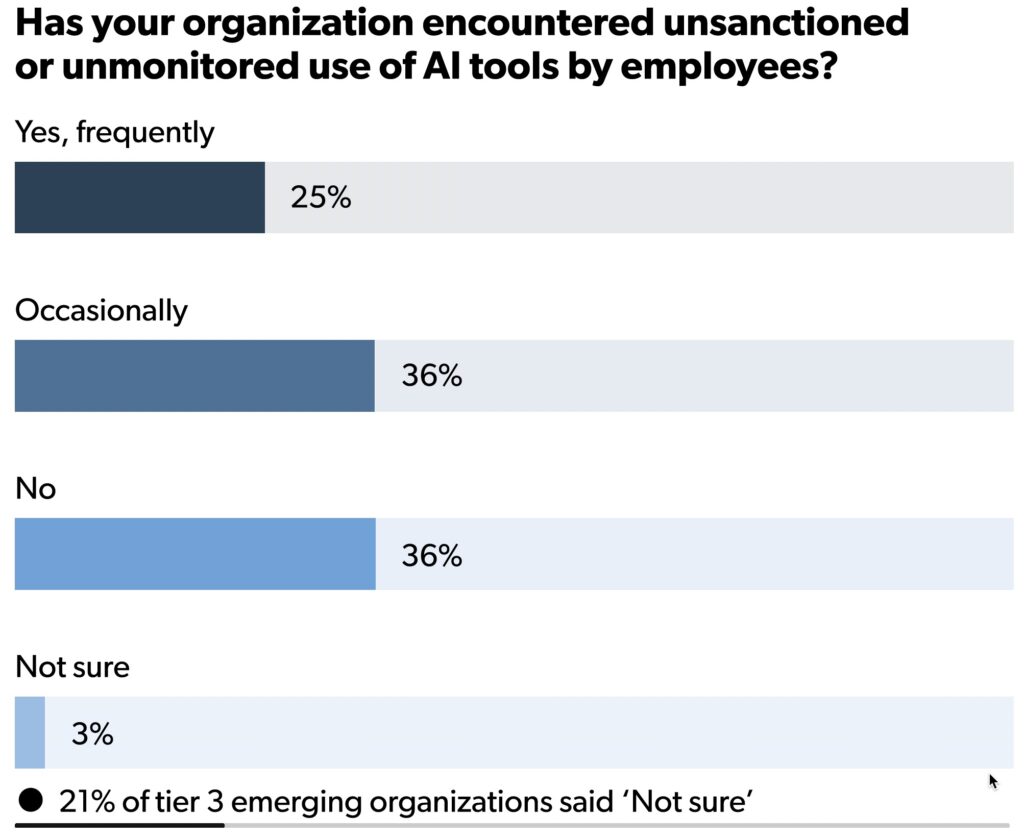

On one side, you have the groundswell of bottom-up adoption. Our research shows that 61% of organizations report the unsanctioned use of AI tools, a trend often called “shadow AI.” Employees, eager to improve their productivity, are adopting generative AI tools with or without official approval.

While this demonstrates initiative, it also introduces unvetted tools into your environment, creating significant data security and compliance risks.

The Top-Down

On the other side, there is immense top-down pressure from executive leadership. Your CEO and board are reading about the competitive advantages of AI and are pushing for rapid implementation to drive efficiency and innovation.

They expect IT to facilitate this transformation immediately, often without a full appreciation for the underlying complexities of security and governance.


This squeeze forces you into a reactive posture, trying to secure tools that are already in use while simultaneously trying to build a strategic framework for the future. It is a difficult position. It may feel like locking down and limiting access is the right approach to give you control.

But this solution might block the very innovation you want to lead. It could slow down the business in ways that have long-term impact.

Instead, the solution is to build a governance framework that can move at the speed of AI. A framework that channels the energy of both bottom-up adoption and top-down pressure into a secure, unified, and strategic direction. The first step in building that framework is understanding the people who will bring it to life.

THE TEAM

Finding the Right Minds for Safe, Secure, and Effective AI Use

When we talk about AI, the conversation naturally gravitates toward large language models, neural networks, and processing speeds. But the most critical component of a successful AI strategy isn’t silicon or code—it’s people.

Before you can implement technical controls or deploy agents, you must establish the rules of engagement. The questions you want to be asking extend out in all directions.

- Who decides what tools we should use, and how?

- Who decides what tools are safe, and by what standards?

- Who determines what’s acceptable risk, and for what function?

- Who measures success, and what metrics should even be used?

Establishing a formal AI Governance Committee is your first move.

But getting alignment is just the first step.

Our research finds that 90% of IT leaders feel their personal views on AI are aligned with their organization’s. The long-term value of an AI Governance Committee lies in maintaining that alignment as AI continues to evolve rapidly in capability and use case. It is about bringing the right voices into the room to transform friction into a framework for safe acceleration.

The Architect’s Role: Balancing the Triad

Effective governance requires balancing three competing forces: Security, Safety, and Effectiveness.

In many organizations, these forces work against each other. Security wants to lock everything down. Effectiveness wants to break everything open. Safety wants to pause everything until standards are set. As the architect, your job isn’t to pick a side. Your job is to synthesize these viewpoints into a coherent strategy.

Let’s look at the key players you need to bring to the table and the specific roles they play.

Your security team—whether that’s a CISO, a dedicated SecOps engineer, or a trusted MSP partner—is focused on protecting the fortress. When they look at AI, they see a new attack vector. They are concerned with:

- Adaptive threats: AI-generated malware or sophisticated phishing attacks.

- Vulnerabilities: Weaknesses in the AI tools themselves that could be exploited.

- Access control: Ensuring that an AI agent doesn’t have permissions it shouldn’t have (e.g., a customer support bot accessing payroll data).

You need their rigorous skepticism. However, you must help them transition from a posture of “block by default” to “secure by design.” You help them understand that blocking AI entirely only drives it into the shadows, where it cannot be monitored.

By implementing identity-centric controls (which we will cover later), you give Security the visibility they need to say “yes” safely.

While Security looks outward at threats, Safety looks inward at liability. This group includes your General Counsel, Compliance Officers, and HR leaders. They are less concerned with a hacker breaking in and more concerned with an employee voluntarily handing over sensitive data to a public model. Their concerns include:

- Data leakage: Proprietary code or customer PII being ingested by a public LLM.

- Copyright and IP: Who owns the code or content the AI generates?

- Regulatory compliance: Violating GDPR, CCPA, or industry-specific mandates through improper data handling.

Legal often operates with a conservative “wait and see” approach. Your role is to translate their abstract fears into technical controls.

When Legal says, “We can’t have PII in ChatGPT,” you reply, “We can implement a browser extension or API gateway that automatically scrubs PII before it leaves our network.” You turn policy requirements into technical realities.
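As a rough illustration of what “scrubbing PII before it leaves our network” could mean in practice, here is a minimal Python sketch. The patterns, the `scrub_pii` function name, and the bracketed labels are all assumptions made for this example. Real PII detection needs far broader coverage (names, addresses, account numbers) and would normally sit inside a vetted gateway or data loss prevention product rather than a handful of regular expressions.

```python
import re

# Hypothetical patterns for illustration only; they cover a few common
# US-style formats and will miss many real-world forms of PII.
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scrub_pii(prompt: str) -> str:
    """Replace each match with a bracketed label such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        prompt = pattern.sub(f"[{label}]", prompt)
    return prompt


if __name__ == "__main__":
    print(scrub_pii("Contact jane.doe@example.com or 555-123-4567, SSN 123-45-6789"))
```

The point of the sketch is the shape of the control, not the patterns themselves: the prompt is rewritten before it reaches the model, so the policy does not depend on every employee remembering it.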

The Effectiveness group includes your CEO, CFO, and department heads (Sales, Marketing, Engineering). They are under pressure to perform. They read the headlines about AI productivity gains and want those results yesterday. They are often the drivers of shadow AI because they prioritize output over process. Their focus is:

- Productivity: Doing more with less.

- Innovation: Creating new features or services faster than competitors.

- Competitive advantage: Not getting left behind.

These are your accelerators.

If left unchecked, they might bypass safety protocols to get results. Your value proposition to them is stability and scale. You explain that a governed AI strategy isn’t a bottleneck—it’s a superhighway. By standardizing tools and access, you prevent the downtime and data disasters that would otherwise derail their momentum.


Why is IT the natural leader of this committee?

Because managing conflicting requirements is what you do every single day. You speak the language of risk to the CISO, the language of liability to Legal, and the language of ROI to the CEO. When you convene the Governance Committee, your goal is to move the conversation from “Can we use AI?” to “How do we use AI in a way that satisfies everyone?”

Practical Steps for Your Committee

1. Identify a Point Person

While the committee includes various stakeholders, it needs a single owner to drive the agenda. That owner should be an IT leader who has project management experience and the political capital to navigate inter-departmental friction.

2. Define the “Yes” Path

The committee’s output shouldn’t just be a list of banned tools. It must produce a clear process for approval. So what does a checklist look like?

- Security: Does it support SSO? Is data encrypted?

- Safety: What are their data retention policies? Do they train their models on our data?

- Effectiveness: Does it integrate with our existing stack? Is it worth the cost?

3. Regular Cadence

AI moves too fast for quarterly reviews. In the early stages, meet bi-weekly or monthly. Review new tool requests, analyze shadow AI reports (generated from your SaaS management tools), and update policies based on the latest industry developments.
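To make the “Yes” path concrete, the approval checklist from step 2 can be captured as a small, repeatable review record. The Python sketch below is one possible shape, not a prescribed format. The `ToolReview` fields, the `open_issues` function, and the 30-day retention threshold are assumptions chosen for illustration, and the tool names in the usage lines are made up.

```python
from dataclasses import dataclass


@dataclass
class ToolReview:
    """One tool request, answered against the committee's checklist."""
    name: str
    supports_sso: bool           # Security: does it support SSO?
    encrypts_data: bool          # Security: is data encrypted?
    retention_days: int          # Safety: how long does the vendor keep our data?
    trains_on_our_data: bool     # Safety: do they train their models on our data?
    integrates_with_stack: bool  # Effectiveness: does it fit our existing stack?
    worth_the_cost: bool         # Effectiveness: is it worth the cost?


def open_issues(review: ToolReview, max_retention_days: int = 30) -> list[str]:
    """Return the items blocking approval. An empty list means 'yes'."""
    checks = [
        (review.supports_sso, "Security: no SSO support"),
        (review.encrypts_data, "Security: data is not encrypted"),
        (review.retention_days <= max_retention_days, "Safety: retention exceeds policy"),
        (not review.trains_on_our_data, "Safety: vendor trains on our data"),
        (review.integrates_with_stack, "Effectiveness: does not integrate with our stack"),
        (review.worth_the_cost, "Effectiveness: cost not justified"),
    ]
    return [message for passed, message in checks if not passed]


if __name__ == "__main__":
    request = ToolReview("QuickSummarize", False, True, 365, True, True, True)
    for issue in open_issues(request):
        print(issue)
```

Returning the list of open issues, rather than a bare approve or reject, gives the requester something to act on, which keeps the committee from becoming a list of banned tools.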

Once you have the people aligned, you have the mandate to act. But a mandate without a mechanism is just a document. To execute the will of the Governance Committee, you need a technological foundation capable of enforcing these decisions.

THE TECH

Finding the Right Components for an AI Control Plane

An aligned governance committee is essential, but a strategy without the right technology is merely a conversation.

To enforce the policies, manage the risks, and unlock the opportunities defined by your stakeholders, you need a unified technical foundation. This is where governance moves from a theoretical framework to an operational reality.

The right technology stack helps you accelerate AI adoption safely. It provides the visibility to understand what is happening, the mechanisms to enforce who and what has access, and the stability to transform AI from a potential risk into a scalable, competitive advantage.

The entire structure rests on a simple but powerful framework: See, Control, and Accelerate.

SEE

Uncovering the Full Picture

You cannot govern what you cannot see. The first technical mandate is to achieve complete visibility into your entire IT environment.

With 61% of organizations reporting the use of unsanctioned AI tools, “shadow AI” is in many ways the default state. Your marketing team’s preferred generative AI writing tool, the engineering department’s new code assistant, and the finance team’s data analysis bot all represent potential blind spots.

To move from a reactive to a proactive stance, you must illuminate these shadows. This requires robust SaaS management and discovery capabilities. By implementing tools that can automatically identify every application connected to your environment—sanctioned or not—you can create a comprehensive inventory of AI usage. This is the first step toward reclaiming control.

This visibility provides the Governance Committee with the data needed to make informed decisions. Instead of guessing which tools are in use, you can present a clear report that answers critical questions:

- Which AI applications are most prevalent in our organization?

- Which departments are the heaviest adopters?

- What is the potential data exposure from these unsanctioned tools?

With this information, you can prioritize which tools to vet, which to sanction, and which to replace with more secure alternatives, turning unknown risks into manageable assets.
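The report described above can start very simply. The following Python sketch assumes your discovery tooling can export one record per observed user in the form (application, department, sanctioned). That record shape, the sample data, and the `build_report` function are hypothetical. Estimating actual data exposure would also require data classification, which is not modeled here; the list of unsanctioned applications is only the starting point for that question.

```python
from collections import Counter

# Hypothetical discovery records, one per observed user:
# (application, department, sanctioned)
SIGHTINGS = [
    ("ChatGPT", "Marketing", True),
    ("ChatGPT", "Engineering", True),
    ("ChatGPT", "Finance", True),
    ("CodeHelper", "Engineering", False),
    ("CodeHelper", "Engineering", False),
    ("SheetBot", "Finance", False),
]


def build_report(sightings):
    """Summarize discovery data into the questions the committee asks."""
    apps = Counter(app for app, _, _ in sightings)
    departments = Counter(dept for _, dept, _ in sightings)
    unsanctioned = sorted({app for app, _, ok in sightings if not ok})
    return {
        "most_prevalent_apps": apps.most_common(),
        "heaviest_departments": departments.most_common(),
        "unsanctioned_apps": unsanctioned,
    }


report = build_report(SIGHTINGS)

if __name__ == "__main__":
    for question, answer in report.items():
        print(question, answer)
```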

CONTROL

Centralizing Identity and Access

Visibility is the starting point, but control is where governance truly takes hold. At the core of AI security is a principle IT leaders have long understood: identity is the new perimeter. The effectiveness of your AI strategy hinges on your ability to manage who and what gets access to your resources.

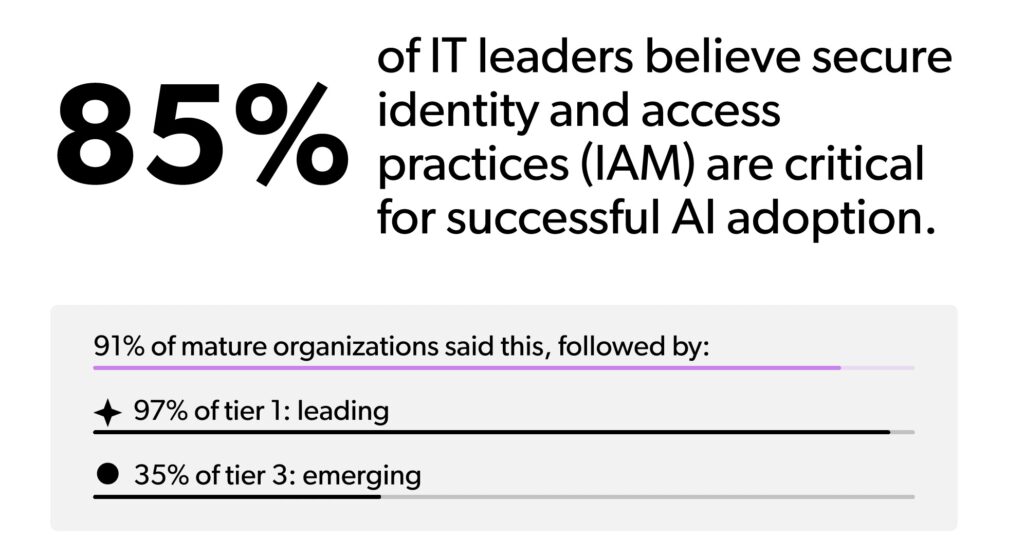

This is why 85% of IT leaders agree that a centralized Identity and Access Management (IAM) platform is critical for secure AI adoption. A unified IAM solution serves as the bedrock for control, allowing you to enforce consistent policies across every user and every application.

When you centralize identity, you can:

- Ensure that every sanctioned AI tool is accessed through your secure, centralized identity provider, eliminating weak or reused passwords.

- Create conditional access policies that grant or deny access based on context, such as the user’s location, device posture, or time of day.

- Streamline the onboarding and offboarding process, ensuring that access to AI tools is granted on day one and, more importantly, revoked instantly upon departure.
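To show how a conditional access policy weighs context, here is a minimal Python sketch of the decision logic. It is not the policy language of any particular IAM product. The `AccessRequest` fields, the allowed countries, the working hours, and the three outcomes are assumptions picked for the example.

```python
from dataclasses import dataclass
from datetime import time


@dataclass
class AccessRequest:
    user: str
    country: str            # where the sign-in originates
    device_compliant: bool  # device posture reported by device management
    local_time: time        # time of day at the user's location


# Illustrative policy values; your committee would set the real ones.
ALLOWED_COUNTRIES = {"US", "CA"}
WORK_HOURS = (time(7, 0), time(19, 0))


def decide(request: AccessRequest) -> str:
    """Return 'allow', 'require_mfa', or 'deny' based on sign-in context."""
    if not request.device_compliant:
        return "deny"
    if request.country not in ALLOWED_COUNTRIES:
        return "deny"
    start, end = WORK_HOURS
    if not (start <= request.local_time <= end):
        # Unusual hours are not blocked outright, but they do raise the bar.
        return "require_mfa"
    return "allow"


if __name__ == "__main__":
    print(decide(AccessRequest("dee", "CA", True, time(23, 0))))
```

The step-up outcome matters as much as allow and deny: it lets you respond to unusual context without blocking legitimate work.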

This level of control must extend beyond the user.

As we discussed earlier, agentic AI introduces a new class of identity that requires its own set of rules. A unified foundation is critical for managing the access rights of these autonomous agents, ensuring they can perform their designated tasks without gaining unintended permissions.

By managing both human and machine identities from a single console, you create a cohesive security posture that is strong enough to support the next wave of automation.

ACCELERATE

Transforming Risk into Advantage

When you can see your entire AI landscape and control access through a unified identity core, something remarkable happens: you move beyond defense and start enabling innovation. This is the final stage of the framework.

Organizations that embrace AI culturally and operationally are feeling the effect. And it’s a good one. By building this unified IT foundation now (one that combines identity, access, and device management), you can move beyond the initial chaos of adoption and realize the productivity gains everyone talks about.

You can successfully operationalize AI, making it a secure, scalable, and sustainable driver of your business. This foundation gives you the confidence to treat AI not as a threat to be contained, but as a powerful advantage to be unleashed.

THE THOUGHT PROCESS

Finding the Right Way to Evolve Your Thinking

You have aligned your people through the AI Governance Committee. You have established a unified technological foundation to see and control access.

Now, we arrive at the third and final pillar.

Technology alone cannot solve the AI challenge because AI is a new kind of entity within your organization. To govern it effectively, you must evolve the way you think about key concepts like identity, trust, and autonomy.

To lead your organization through this transition, you need to revise your mental model. It starts with expanding your definition of identity, and asks you to rethink what it means to trust a system.

The 3 Faces of Identity

Identity has become the centerpiece of so many initiatives changing the IT landscape. This means the first shift in your thought process is to recognize that your environment now hosts three distinct types of identities, each requiring a specific governance approach. We mentioned this concept before, but it’s worth revisiting here.

AI is not a human. But it makes choices to accomplish its goals, a distinct difference from scripts that run on tight rails. Thus, we have a new identity type we need to consider.

Let’s look at how these three types compare:

1. The Human Identity (High Judgment, Low Speed)

This is the traditional user. Humans are creative, ethical, and capable of understanding context. However, they are also prone to fatigue, social engineering, and error.

Governance Focus: Authentication and Education. You verify who they are (MFA, Biometrics) and train them on safe behavior.

2. The Machine Identity (Zero Judgment, High Speed)

These are your traditional service accounts, APIs, and bots. They do exactly what they are scripted to do, every single time. They never sleep, never get tired, and never deviate from the plan.

Governance Focus: Least Privilege and Rotation. You lock down their permissions to the absolute minimum and rotate their credentials frequently to prevent misuse.

3. The AI Identity (Variable Judgment, High Speed)

This is the new category. An AI agent is software, but it operates with a degree of autonomy that traditional scripts do not. It makes decisions. It interprets vague instructions. It can hallucinate or be tricked by “prompt injection” attacks.

Governance Focus: Guardrails and Supervision.

You cannot treat an AI agent purely like a machine because its actions aren’t fully predictable. You cannot treat it like a human because it lacks true moral reasoning. You must govern it by bounding its autonomy—giving it a sandbox where it can work, but hard limits it cannot cross.

These are the definitions. Knowing they are at the center is the start, but the solution to accelerating AI use safely, securely, and effectively will ultimately fall back on another key concept: trust.

The shifts and evolutions of how we work popularized Zero Trust, a security framework built on a simple premise: never trust, always verify. It puts identity at the center, with an approach that focuses on making sure the person behind the login is who they say they are. This means we verify human identity at every access point by asking them to reach into the physical world and show proof (their physical fingerprint, or a physical fob, or a code from their physical phone).

Non-human identities can’t verify themselves the way a human can, so for them Zero Trust leans on scope of access. We still can’t trust that the human behind the script is who we think they are, so instead we monitor the script’s behavior to ensure it is doing only what it was specifically designed to do. The foundational principle of least privilege is the main driver, with additional controls that block access outside of tightly defined windows of operation.

For AI, we must take this principle a step further. We must move from verifying access to verifying intent and outcome. The question of trust is less about who is using it and more about whether we trust that the AI will act appropriately and with sound judgment.

The Trust Trap Revisited

As discussed earlier, the “Trust Trap” occurs when we assume AI software is neutral and reliable. A traditional Zero Trust approach might verify that an AI agent has the correct API key to access a database. But that verification is no longer enough. You also need to ask: Why is it accessing that database? Is the volume of data it is requesting consistent with its typical behavior?
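One way to picture the “is this volume consistent with its typical behavior?” question is a simple statistical baseline. The Python sketch below flags a request whose size sits far above the agent’s own history. The `is_unusual_volume` function, the three-standard-deviation threshold, and the choice to treat an agent with no history as unusual are all assumptions for illustration. Production monitoring would use richer signals than row counts alone.

```python
from statistics import mean, stdev


def is_unusual_volume(history: list[int], current: int, threshold: float = 3.0) -> bool:
    """Flag a request far above the agent's historical request sizes.

    `history` holds past row counts for this agent; `current` is the
    row count of the request being evaluated.
    """
    if len(history) < 2:
        # No baseline yet: treat the request as unusual and let a human look.
        return True
    baseline = mean(history)
    spread = stdev(history)
    if spread == 0:
        return current > baseline
    return (current - baseline) / spread > threshold


if __name__ == "__main__":
    past_requests = [100, 120, 90, 110, 105]
    print(is_unusual_volume(past_requests, 115))
    print(is_unusual_volume(past_requests, 5000))
```

A valid API key answers whether the agent may connect. A check like this starts to answer whether what it is doing still looks like its job.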

To evolve your Zero Trust strategy for AI, you must adopt a posture of fundamental skepticism regarding AI output. As the technology catches up to provide automated, proactive support, your job is to set the groundwork by developing policies that everyone can adhere to. These policies can cover important topics like:


Assuming Fallibility

Build workflows that assume the AI will eventually make a mistake. Never give an AI agent write-access to critical production databases without a human-in-the-loop or a strict rollback mechanism.


Containing the Blast Radius

If a human user is compromised, they can only work as fast as they can type. If an AI agent is compromised, it can exfiltrate data at machine speed. Therefore, network segmentation and micro-segmentation are non-negotiable. An AI agent used for marketing analytics should have zero network path to HR records.


Enforcing Lifecycle Policy Controls

Implement an AI Identity Track that treats every agent as a “digital intern.” This track enforces Just-In-Time (JIT) access and strong isolation for any agent with write access. This way, even if an agent “panics” or makes a catastrophic error of judgment (as in the Replit incident), it cannot access live production environments without supervision.
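Just-In-Time access is easier to reason about with a small model. The Python sketch below treats a grant as a permission for one agent, on one resource, that expires on its own. The `JitGrant` class, the agent and resource names, and the 15-minute window are hypothetical. In practice the grant would be issued and checked by your identity platform, not by application code.

```python
from datetime import datetime, timedelta, timezone


class JitGrant:
    """A time-boxed permission for one agent on one resource."""

    def __init__(self, agent_id: str, resource: str, minutes: int, issued_at: datetime):
        self.agent_id = agent_id
        self.resource = resource
        self.expires_at = issued_at + timedelta(minutes=minutes)

    def allows(self, agent_id: str, resource: str, at: datetime) -> bool:
        """True only for the named agent, the named resource, and before expiry."""
        return (
            agent_id == self.agent_id
            and resource == self.resource
            and at < self.expires_at
        )


# Example: a 15-minute grant on a staging database (names are made up).
issued = datetime(2025, 1, 6, 9, 0, tzinfo=timezone.utc)
grant = JitGrant("report-agent", "staging-db", minutes=15, issued_at=issued)

if __name__ == "__main__":
    print(grant.allows("report-agent", "staging-db", issued + timedelta(minutes=5)))
    print(grant.allows("report-agent", "production-db", issued + timedelta(minutes=5)))
```

Because the grant names a single resource and carries its own expiry, an agent that goes off script has nothing standing to fall back on once the window closes.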

The “Digital Intern” Mindset

So, how do you operationalize this new thought process? The most practical mental model for IT leaders is to treat every AI agent as a Digital Intern.

Imagine you hired a brilliant but inexperienced intern. They are eager, incredibly fast, and have access to the entire internet’s knowledge. But they don’t know your company culture, they don’t understand nuance, and they will confidently hallucinate an answer if they don’t know the truth.

How would you manage this intern?

- You wouldn’t give them the keys to the server room on day one.

- You would review their work before they send it to a client.

- You would give them specific tasks, not vague responsibilities.

This is exactly how you must govern Agentic AI.

Probationary Access

When deploying a new agent, start with read-only permissions. Let it “shadow” the process. Monitor its logs. Only when it has proven it acts predictably do you grant it write access.

Supervised Autonomy

High-risk actions like deleting files, transferring funds, or changing configurations require a human approval step. Full stop. The AI can prepare the action, but a human must pull the trigger.

Task-Based Identity

Do not give an AI agent a broad “admin” role. Create a specific identity for that specific agent with permissions scoped strictly to the task at hand. If the agent is designing a website, it needs access to the CMS, not the CRM.
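The three controls above can be combined into a single policy check. The following Python sketch is one hedged way to express that idea; the `AgentIdentity` class, the action names, and the decision strings are all hypothetical and exist only to illustrate the order of the checks.

```python
from dataclasses import dataclass

# Hypothetical action names standing in for the high-risk examples above.
HIGH_RISK_ACTIONS = {"delete_file", "transfer_funds", "change_config"}


@dataclass
class AgentIdentity:
    """A task-based identity: one agent, one narrow set of systems."""
    name: str
    allowed_systems: set
    on_probation: bool = True  # new agents start read-only


def evaluate(agent, system, action, human_approved=False):
    """Decide whether an agent may perform an action on a system."""
    # Task-Based Identity: anything outside the agent's scope is denied.
    if system not in agent.allowed_systems:
        return "deny: system out of scope"
    # Probationary Access: agents on probation may only read.
    if agent.on_probation and action != "read":
        return "deny: read-only during probation"
    # Supervised Autonomy: high-risk actions wait for a human.
    if action in HIGH_RISK_ACTIONS and not human_approved:
        return "hold: awaiting human approval"
    return "allow"


# Example: a website-design agent scoped to the CMS only.
web_agent = AgentIdentity(name="web-designer", allowed_systems={"cms"})
```

In this sketch the website agent can read the CMS from day one, cannot touch the CRM at all, and still needs a human sign-off for destructive actions after its probation ends.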

Adopting this thought process transforms your role. You become the curator of autonomy. The stereotypical “Department of NO” becomes the place employees actively seek out to learn how to accomplish the innovative new work they have in mind.

By distinguishing between the three faces of identity, evolving your Zero Trust principles, and applying the “Digital Intern” framework, you strip away the mystery of AI. It stops being a terrifying black box and becomes what it was always meant to be: a powerful resource that, with the right management, can drive your organization forward.

Turning Risk into Advantage

Whatever your personal opinion may be about the actual impact of AI, you cannot deny that it is shifting how organizations operate, innovate, and compete. Throughout this guide, we have laid out a strategic framework to navigate this transformation. This is not meant to be a defensive measure against risk. Quite the opposite. This is a deliberate plan to harness AI and turn it into your greatest advantage.

The mandate is clear. As an IT leader, you are uniquely positioned to architect this future. You have the technical depth, the cross-functional visibility, and the strategic foresight to move your organization beyond the initial, chaotic phase of adoption and into an era of secure, scalable AI operationalization.

This journey is built on three core pillars:

1. Team

You are the central architect who unites the key stakeholders (Security, Safety, and Effectiveness) into a cohesive AI Governance Committee. By translating their competing priorities into a unified strategy, you create the alignment necessary to move forward with confidence.

2. Technology

You lay the unified foundation to see, control, and accelerate AI adoption. By centralizing identity and access management, you gain visibility into shadow AI, enforce consistent security policies, and create the stable bedrock needed to support innovation at scale.

3. Thought Process

You evolve your organization’s mindset. By establishing AI as a distinct “third face” of identity and treating it as a “Digital Intern,” you create the mental models required to govern autonomous systems safely, applying Zero Trust principles to a new class of non-human users.

The framework we have outlined provides a path that channels the immense pressure from both bottom-up user adoption and top-down executive directives into a productive, secure force. You create an environment where AI can thrive in a secure, scalable, and sustainable way.

What exactly does that mean?

- Secure

By centralizing identity and extending Zero Trust principles to AI agents, you ensure that every user and every system has the right level of access, and no more. This protects your critical data and prevents the catastrophic breaches that can arise from misconfigured or compromised agents.

- Scalable

A unified platform eliminates the friction of managing dozens of point solutions. When your business wants to deploy a new AI tool or onboard a new team, you have an automated and repeatable process ready to go. You can scale at the speed of business, not at the speed of manual IT administration.

- Sustainable

The AI landscape will continue to evolve at a breathtaking pace. A flexible, identity-centric foundation is built for this change. It allows you to adapt your policies, integrate new tools, and manage new types of AI identities as they emerge, ensuring your governance strategy remains relevant for the long term.

Ultimately, this is how you turn the AI mandate into a career-defining opportunity. You move from being a reactive manager of technology to a proactive enabler of business strategy.

The JumpCloud Advantage: A Unified Foundation for the Autonomous Workforce

The core principle underpinning this entire framework is unification. Siloed systems for identity, device management, and access control are simply too slow and too fragmented to govern the autonomous workforce of the future. Our research reinforces this, showing that 46% of IT leaders believe IT unification is “critical” for scaling AI securely.

JumpCloud provides a single, unified platform designed for this new reality. It is the only solution capable of consolidating the management of every identity—human, non-human, and agentic—and every device, regardless of its operating system.

With JumpCloud, you can:

See everything by discovering and managing SaaS applications to eliminate shadow AI.

Control everything through a centralized identity provider with robust, conditional access policies for users and AI agents alike.

Accelerate everything by automating the entire identity lifecycle and providing a secure foundation for innovation.

Love it, hate it, or both… you cannot fight against the influence of AI. What your organization needs is someone to build the channels to direct its power.

That someone has to be you.

By establishing a unified foundation now, you can confidently lead your organization forward, transforming the promise of AI into a tangible and lasting competitive advantage.
