Remember shadow IT? It was the bane of every IT admin’s existence a decade ago. Employees bypassed IT to download whatever app they fancied to “get work done,” leaving a mess of security holes and compliance nightmares in their wake.

Well, history is repeating itself. But this time, it’s smarter, and potentially much more dangerous.

Welcome to the era of shadow AI.

Shadow AI happens when employees use unsanctioned artificial intelligence tools without IT’s knowledge or approval. As CIO, you are accountable for understanding how this starts and why it puts your organization at risk.

It often starts innocently enough. A marketing manager wants to write copy faster, or a developer needs a quick code snippet. They find a free AI tool, paste in some company data, and… the job is done. But the data has left your secure perimeter, and you have no idea where it went.

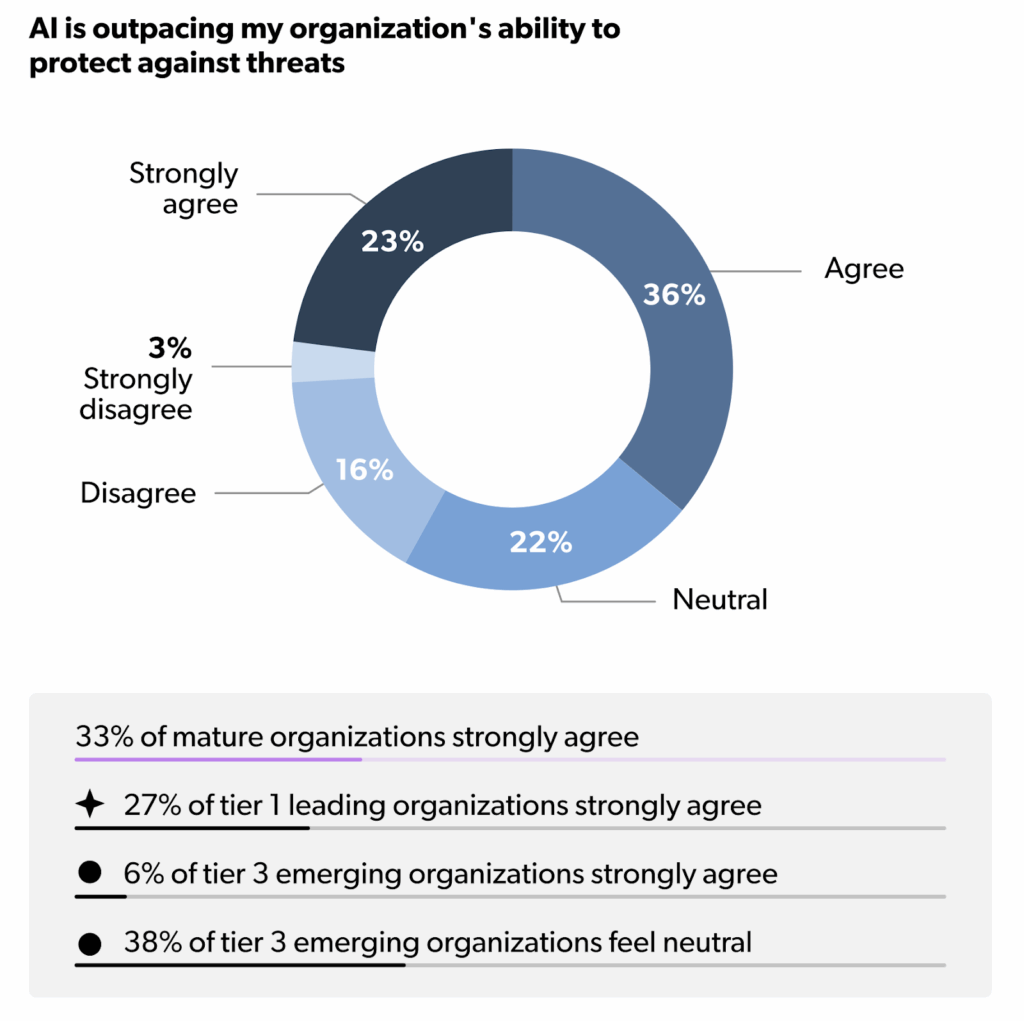

As a CIO, you see this situation differently than most. The speed at which AI is being adopted is outpacing your ability to secure it. It may sound extreme, but if you don’t act now, you risk losing control of your data and your infrastructure.

We recently released our latest industry report, The Dual Disconnect: Why Your AI Maturity Now Fails to Scale, which uncovers insights about AI strategies and their challenges. Among its many findings, the report highlights the gaps between AI adoption and security, pointing out where businesses may struggle to scale their AI maturity.

In this article, we’ll dive into some of that data to shine a light on why shadow AI can create unacceptable levels of risk, and explore how you, as the leader of IT, can address that risk.

The Risks of Shadow AI

The problem with shadow AI isn’t just that people are using tools you didn’t approve.

It’s about what they are feeding those tools.

When an employee pastes sensitive customer data, proprietary code, or financial figures into a public AI model, that data may be retained and, depending on the provider’s terms, used to train the model. If this happens, you lose control over it, and it could resurface in responses to other users. This creates massive risks for data privacy, intellectual property, and regulatory compliance.

The data supports this concern. According to our most recent industry findings, 61% of organizations report encountering unsanctioned or unmonitored use of AI tools. That is a staggering number. It means that in roughly three out of five companies, IT leaders know there are tools operating in the dark.

The risks are tangible:

- Data leaks: Your intellectual property could be shared with competitors or the public without your knowledge.

- Compliance violations: Using unvetted tools can violate GDPR, HIPAA, and other strict data privacy laws.

- Security gaps: Unsecured tools can act as entry points for bad actors.

These risks are always present in an organization, but shadow AI makes them worse. When your employees use tools that you don’t know about, your ability to contain threats is actively hindered. The consequences become much more difficult to manage.

Perhaps most concerning is that 60% of IT professionals agree that AI is outpacing their organization’s ability to protect against threats.

You are in a race, and right now, the technology is running faster than your defenses.

The CIO’s Role in Addressing the Risks

Mitigating the risks of shadow AI requires decisive, strategic actions from the CIO.

You are responsible for setting clear expectations about which controls are needed to protect your organization. This means looking beyond basic tool approval and taking ownership of governance, risk management, and education across your teams.

While your team works on the ground with stakeholders and processes requests, your responsibility as CIO is to:

- Stay alert to the flow of sensitive and proprietary data, ensuring it never enters unsanctioned platforms.

- Lead efforts to systematically uncover where AI tools are being used without oversight.

- Prioritize the creation and enforcement of policies that make clear what is and is not allowed regarding AI use.

- Bridge communication gaps between IT, security, and business units, so everyone understands how shadow AI can affect compliance, privacy, and brand reputation.

- Advocate for investments in solutions and processes that give your teams visibility into AI activities organization-wide.

By claiming and acting on these responsibilities, CIOs are best positioned to identify risks early, close gaps in oversight, and maintain control, even as AI adoption accelerates.
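To make the discovery responsibility more concrete, here is a minimal Python sketch of how a team might surface unsanctioned AI usage from web proxy or DNS logs. It assumes a simplified log format of `<timestamp> <user> <domain>` per line, and the domain lists and the `find_shadow_ai` function are hypothetical placeholders; a real deployment would use your gateway’s actual export format and a maintained inventory of AI services.

```python
from collections import Counter

# Hypothetical catalogs: placeholder domains, not real AI services.
# In practice, KNOWN_AI_DOMAINS would come from a maintained inventory
# and APPROVED_AI_DOMAINS from your sanctioned-tool list.
KNOWN_AI_DOMAINS = {"chat.example-ai.com", "api.example-llm.net", "assist.example.org"}
APPROVED_AI_DOMAINS = {"assist.example.org"}


def find_shadow_ai(log_lines):
    """Count requests per (user, domain) to AI services that are not approved.

    Assumes each log line has the form "<timestamp> <user> <domain>".
    Lines that do not match that shape are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue  # malformed line; skip it rather than fail the whole scan
        _timestamp, user, domain = parts
        domain = domain.lower()
        if domain in KNOWN_AI_DOMAINS and domain not in APPROVED_AI_DOMAINS:
            hits[(user, domain)] += 1
    return hits


if __name__ == "__main__":
    sample_log = [
        "2024-05-01T09:00:00Z alice chat.example-ai.com",
        "2024-05-01T09:05:00Z bob assist.example.org",
        "2024-05-01T09:07:00Z alice chat.example-ai.com",
        "2024-05-01T09:10:00Z carol api.example-llm.net",
        "corrupted entry",
    ]
    for (user, domain), count in find_shadow_ai(sample_log).most_common():
        print(f"{user} -> {domain}: {count} request(s)")
```

This sketch matches domains exactly; catching subdomains would need suffix matching. The output is a starting point for conversations with teams about what they need, not a verdict on any individual.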

What CIOs Must Do Now

Your response to shadow AI sets the tone for technology adoption and risk management across your entire organization. This is your ultimate opportunity.

Your leadership is necessary to guide teams, create alignment, and implement the controls that keep data secure. Simply blocking AI is like trying to stop the tide. Instead, as CIO, you must build a channel for it to flow safely.

Here are three actionable steps you can take immediately to bring shadow AI into the light.

1. Centralize Your Identity and Access Management (IAM)

Visibility is your best defense. You cannot secure what you cannot see. The most effective way to gain visibility is to control who has access to what.

85% of IT leaders agree that secure IAM practices are critical for successful AI adoption. By centralizing identity management, you ensure that every login, every access request, and every use of a tool is tied to a verified identity. This applies to both human users and non-human identities (like bots).

When you control identity, you control entry. You can enforce policies that restrict which tools can be accessed and by whom. If an employee tries to sign up for an unapproved AI tool using their corporate credentials, a centralized system can flag or block that attempt.
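The decision a centralized identity layer makes can be sketched as a simple policy check: is the identity verified, is the tool approved, and is this user entitled to it? The Python below is a minimal illustration under those assumptions, not any vendor’s API; `AccessPolicy`, `Decision`, and the app and group names are hypothetical, and in practice this logic lives inside your identity provider or access gateway.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    FLAG = "flag"    # let the request through, but record it for review
    BLOCK = "block"


@dataclass
class AccessPolicy:
    # Hypothetical mapping: sanctioned AI app -> groups entitled to use it.
    approved_apps: dict[str, set[str]] = field(default_factory=dict)
    # If True, unapproved apps are blocked; if False, they are only flagged.
    block_unapproved: bool = True

    def decide(self, identity_verified: bool, user_groups: set[str], app: str) -> Decision:
        if not identity_verified:
            return Decision.BLOCK  # no verified identity, no access
        allowed_groups = self.approved_apps.get(app)
        if allowed_groups is None:
            # The tool is not on the approved list at all.
            return Decision.BLOCK if self.block_unapproved else Decision.FLAG
        if user_groups & allowed_groups:
            return Decision.ALLOW
        return Decision.BLOCK  # approved tool, but this user is not entitled to it


if __name__ == "__main__":
    policy = AccessPolicy(approved_apps={"enterprise-chat": {"marketing", "engineering"}})
    print(policy.decide(True, {"marketing"}, "enterprise-chat"))
    print(policy.decide(True, {"finance"}, "enterprise-chat"))
    print(policy.decide(True, {"marketing"}, "free-ai-tool"))
```

Setting `block_unapproved=False` at first is one way to observe which tools people reach for before switching to enforcement.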

2. Formalize Governance and Policy

“Shadow” doesn’t necessarily mean there are no rules. But if you don’t tell employees what is allowed, they may assume everything is.

You need clear, written policies regarding AI use. These policies formalize the rules of engagement, explaining what employees can and can’t do with AI tools. They also establish processes to enforce those rules and create pathways for safe AI adoption.

A comprehensive AI policy should define how to vet and review new tools, how employees can make requests, and what justifications are needed. It also serves as an educational tool, helping employees understand the implications of using AI.

At the end of the day, the goal with formalized policies is to help everyone operate from a shared understanding of security and compliance. But a PDF handbook isn’t enough. You need technical enforcement.

Almost half of organizations agree that IT unification is critical for scaling AI safely. Why? Because current controls often operate in silos, leading to gaps and inefficiencies that put AI systems at risk. Unification addresses this by creating a cohesive framework, ensuring consistent enforcement and better risk management.

Unifying your IT environment ensures consistency across every major aspect of your operations. This makes your organization more secure, more efficient, and easier to manage. When identity and device management are intertwined, your policies are more effective because you have greater certainty that the right people are using the right tools safely.

Tip:

Your governance strategy should include:

- Approved tool lists: Clearly define which AI tools are sanctioned for business use.

- Data handling rules: Explicitly state what types of data (e.g., PII, financial data) are never allowed in AI prompts.

- Browser extensions: Use browser management policies to block extensions that scrape screen data or inject AI into web forms without permission.
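A data handling rule is easier to enforce when something checks prompts before they leave. As a minimal sketch, assuming prompts pass through a gateway or browser plugin you control, a pre-submission check in Python might look like the following. The patterns and the `find_restricted_data` function are hypothetical and cover only a few PII and financial data types (email addresses, US Social Security numbers, payment card numbers); dedicated data loss prevention tools go much further.

```python
from __future__ import annotations

import re

# Hypothetical rule set: a few common PII patterns, for illustration only.
EMAIL_RE = re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b")
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
# 13 to 19 digits, optionally separated by single spaces or hyphens.
CARD_CANDIDATE_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")


def luhn_valid(number: str) -> bool:
    """Return True if the digit string passes the Luhn checksum."""
    total = 0
    for i, ch in enumerate(reversed(number)):
        d = int(ch)
        if i % 2 == 1:  # double every second digit from the right
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def find_restricted_data(prompt: str) -> list[str]:
    """Return the kinds of restricted data detected in a prompt."""
    findings = []
    if EMAIL_RE.search(prompt):
        findings.append("email address")
    if SSN_RE.search(prompt):
        findings.append("US Social Security number")
    for match in CARD_CANDIDATE_RE.finditer(prompt):
        digits = re.sub(r"\D", "", match.group())
        if 13 <= len(digits) <= 19 and luhn_valid(digits):
            findings.append("payment card number")
            break
    return findings


if __name__ == "__main__":
    print(find_restricted_data("Draft a reply to jane.doe@example.com about card 4111 1111 1111 1111"))
    print(find_restricted_data("Summarize our Q3 launch plan"))
```

A non-empty result could block the prompt or route it for review. The Luhn check reduces false positives from arbitrary long numbers such as order IDs.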

3. Build a “Safe Use” Culture

Technological controls are vital, but the human element is just as important.

Most employees aren’t trying to be malicious… they just want to be efficient. This is why CIOs must lead the shift from “blocking” to “enabling.”

Provide your teams with secure, enterprise-grade versions of the tools they want to use. If they are using a chatbot, give them a sanctioned one whose terms protect your data. They get what they want, and so do you. The conversation shifts from whether to use AI to how to use it safely.

Investing in training is also non-negotiable. Teach your teams why shadow AI is dangerous. Show them how a simple copy-paste can lead to a data breach. When employees understand the risk, they become partners in security rather than liabilities.

Leading the Charge

Shadow AI is not going away. As AI tools become more powerful and accessible, the temptation to use them “off the books” will only grow.

As a CIO, your mandate is to turn these new risks into opportunities for your organization. Your guidance is critical.

Set a clear direction for responsible AI use by building unified governance, aligning teams, and holding every stakeholder, including yourself, accountable for compliance and results. Revisit your IT foundation regularly, and keep controls in sync with how AI is deployed and managed across the business.

According to our recent industry survey, while 61% of organizations report unsanctioned or unmonitored AI use, they also see significant potential for growth. Get your copy of The Dual Disconnect: Why Your AI Maturity Now Fails to Scale to learn about both sides of the AI coin. You’ll walk away with practical insights from your peers on turning these risks into a competitive advantage.